When conducting SEO audits for new clients, one of the most frequent issues we highlight is duplicate content. This isn’t because we’re pernickety pedants; it’s because avoiding duplication is of fundamental importance to how websites perform in organic search rankings.

Essentially, Google ‘reads’ your content to understand your website and determine what search terms you should rank for, but if you copy and paste the same wording across several pages, you’ll confuse the bots and shoot yourself in the foot.

Ultimately, they only want to return the most authoritative, trustworthy source of information to match searcher intent, but having too much of the same thing leads to disorientation, meaning the bots are unlikely to display any of your web pages at all, no matter how good your content may actually be.

At the end of the day, Google prizes its user experience above all else, as it’s on a mission to remain synonymous with online search. Thus, if you have swathes of duplicate content plastered across your web presence, you’re not adding any value to the Internet at large, and your search rankings will suffer accordingly.

Here’s our guide to duplicate content rules, and how to avoid upsetting the Google gods.

How does duplicate content harm SEO?

One of the most common occurrences of duplicate content comes in the form of businesses that operate across several cities, with bespoke websites for each specific region. Often, these companies unknowingly hamper their efforts by using the same content across the board, changing only the geographic phrasing to keep each site relevant.

However, Google effectively views this as plagiarism, as each regional site is essentially copying the original source. This will usually lead to each of the sub-sites being heavily demoted in the search results, leaving only one site (the first one to be published) visible in the top pages of the SERPs. Not very helpful when you’re trying to maximise your efforts for local search results.

While businesses in this predicament may not have been outwardly trying to manipulate search engines, you can see how such a shortcut doesn’t hold up to scrutiny; copied content doesn’t add value to the wider conversation, and Google has no way of knowing whether you’re copying yourself or someone else.

Similarly, when we started working with Washware Essentials, we noticed they were running three separate versions of their website: the ‘www.’ version, the ‘non-www.’ version, and their development site were all live and accessible to the public. Google would view each instance of these pages (https://www.washwareessentials.co.uk/, https://washwareessentials.co.uk/ and https://dev.washwareessentials.co.uk/) as 100% duplicate versions, as it sees each URL variation as a totally separate site. As such, Google had a hard job figuring out which was the best site to index and saw the site as riddled with duplicate content issues, meaning only 66 of their 400+ web pages were showing up in search results.

This is the most frequent cause of duplicate content problems that we come across. However, after making a few simple tweaks and ensuring only the ‘www.’ site was live, 90% of their web pages were indexed within a matter of weeks, with many pages ranking highly within the organic search results. You can read more about this project in our Washware Essentials case study.
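To illustrate how host variants multiply, here’s a minimal Python sketch that groups a list of crawled URLs by path, flagging any page that’s reachable on more than one hostname. The hostnames and the `group_duplicate_urls` helper are our own hypothetical examples rather than anything Google provides, so treat it as a rough starting point.

```python
from collections import defaultdict
from urllib.parse import urlsplit

# Hypothetical hostnames for illustration; swap in your own.
PREFERRED_HOST = "www.example.co.uk"
ALIAS_HOSTS = {"example.co.uk", "dev.example.co.uk"}


def group_duplicate_urls(urls):
    """Group URLs that serve the same path from different hostnames."""
    groups = defaultdict(list)
    for url in urls:
        parts = urlsplit(url)
        host = (parts.hostname or "").lower()
        if host == PREFERRED_HOST or host in ALIAS_HOSTS:
            # Key on path and query only, so host variants collide.
            key = (parts.path or "/", parts.query)
            groups[key].append(url)
    # Keep only the paths that are reachable on more than one hostname.
    return {key: found for key, found in groups.items() if len(found) > 1}
```

Feeding this the URLs from a crawl should give you a quick list of pages that need redirecting or canonicalising.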

Additionally, it’s common for blog archive, category and tag pages within sites to automatically copy snippets of content from each blog post, creating multiple duplicate paragraphs within one site. It’s much better to keep these excerpts unique and write brief overviews for each, or to block the category and tag pages from being indexed.
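One common way to block archive pages from being indexed is a robots meta tag with a ‘noindex’ value. As a rough sketch in Python, assuming your category and tag pages live under predictable URL prefixes (the prefixes and the `robots_meta_tag` helper below are hypothetical), a template could choose the tag like this:

```python
# Hypothetical URL prefixes for archive-style pages; adjust to your own site.
ARCHIVE_PREFIXES = ("/category/", "/tag/")


def robots_meta_tag(path):
    """Return the robots meta tag to place in the <head> of a page."""
    if path.startswith(ARCHIVE_PREFIXES):
        # Keep the page out of the index but still let bots follow its links.
        return '<meta name="robots" content="noindex, follow">'
    return '<meta name="robots" content="index, follow">'
```

Most content management systems and SEO plugins offer a setting that does the same job, so you may not need any custom code at all.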

So, in our experience, those suffering from duplication frustration are often completely unaware they’re either bending or breaking the rules. Google is aware of this, and it doesn’t issue penalties as such, but it does suppress or filter out your pages if you don’t take care to create unique content, meaning you won’t gain traction in organic listings.

The one major caveat is where you’re legally obliged to have standardised text across several pages, e.g. terms and conditions for the products you sell. Again, Google understands there are areas where an element of duplication is unavoidable, so you don’t need to worry too much about this, as Matt Cutts, Google’s former Head of Webspam, has outlined in a video on the subject.

If you’re still concerned, you could consider publishing your T’s & C’s on one standalone page, and linking to it from every applicable product page, perhaps with a short overview that paraphrases the most important message. Generally, T’s & C’s don’t aid the user experience (most people simply skim over or block them out) so, while still legally crucial, from a user perspective it may make sense to have a separate page away from the main content.

Main content is the key thing to bear in mind, as Google is really judging the main body of your web page, looking for large duplicated blocks of text, stuffed with keywords, which have been copied from elsewhere, rather than taking too much notice of boilerplate content at the bottom of pages.

As such, it pays to invest in high-quality unique content creation to ensure each web page is optimised for success, naturally earning attention from site visitors and being rewarded with healthy rankings in the SERPs.

Dealing with duplicate content

You can check for potential duplication issues within the metadata of your site by logging into your Google Search Console account and checking the HTML Improvements tab, which details any issues the search bots have discovered whilst crawling your site, such as duplicate title tags or duplicate meta descriptions.

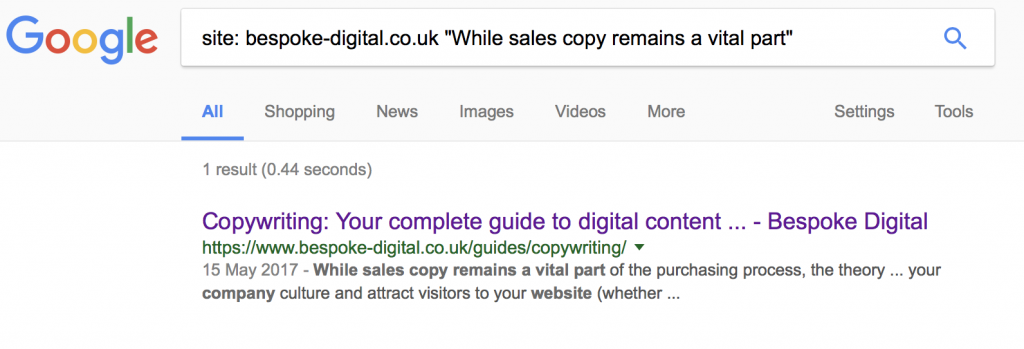
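If you’d like to run a similar check yourself, here’s a minimal Python sketch that looks for duplicate title tags across a set of pages. It assumes you’ve already downloaded the HTML for each URL into a dictionary; the `duplicate_titles` helper and the example URLs are our own hypothetical names, not part of Search Console.

```python
from collections import defaultdict
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collect the text inside a page's <title> element."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def duplicate_titles(pages):
    """Map each repeated title to the URLs that share it.

    `pages` is a dict of {url: html}, assumed to be fetched beforehand.
    """
    by_title = defaultdict(list)
    for url, html in pages.items():
        parser = TitleParser()
        parser.feed(html)
        # Normalise whitespace and case so trivial differences still match.
        title = " ".join(parser.title.split()).lower()
        if title:
            by_title[title].append(url)
    return {title: sorted(urls) for title, urls in by_title.items() if len(urls) > 1}
```

The same approach could be extended to meta descriptions by collecting the relevant meta tag instead of the title.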

You can also use the ‘site:’ search operator to help identify text appearing on multiple URLs. For example, we could input a snippet of text from our guide to digital copywriting to see if it’s accidentally being indexed on variations of the same web page (‘www.’ and ‘non-www.’, ‘http:’ and ‘https:’, etc.):

Thankfully, the selected snippet we pasted from the guide (the text in quotes) only returns one search result, assuring us there are no duplication issues to worry about for this page.
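For anyone who’d like to repeat this check across lots of pages, the query itself is easy to build. Here’s a small Python sketch that combines the ‘site:’ operator with an exact-match phrase in quotes; the function names and the example domain are hypothetical, and you’d still paste the result into Google yourself.

```python
from urllib.parse import quote_plus


def site_search_query(domain, snippet):
    """Build a 'site:' query that looks for an exact phrase on one domain."""
    # Strip double quotes from the snippet so they can't break the phrase match.
    phrase = snippet.replace('"', "")
    return f'site:{domain} "{phrase}"'


def google_search_url(query):
    """Turn a query into a Google search URL you can open in a browser."""
    return "https://www.google.com/search?q=" + quote_plus(query)
```

For example, `site_search_query("example.co.uk", "unique content")` gives `site:example.co.uk "unique content"`.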

It’s also a good idea to periodically paste a random passage of text from your site into Google and search for an exact match, allowing you to see whether it’s been duplicated, either on your site or plagiarised elsewhere on the web. This will also show where you may be duplicating copy on social media profiles. The authority source will usually rank first, so as long as that’s your website there’s little to worry about.

If you do spot multiple versions of the same page, you should canonicalise them, i.e. tell Google which is your preferred URL. You can do this using the rel="canonical" link element, and you can find more details about the process on Google’s canonical URL guidelines.
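In practice, the canonical link element is a single line in the head of each duplicate page, e.g. `<link rel="canonical" href="https://www.example.co.uk/aprons/">`. To check which URL a page is declaring, here’s a minimal Python sketch using the standard library’s HTML parser; the `find_canonical` helper and the example URL are our own hypothetical names.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Record the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        # rel can hold several space-separated values, so split before checking.
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attributes.get("href")


def find_canonical(html):
    """Return the canonical URL declared in the HTML, or None if absent."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical
```

If this returns nothing for a page that has several URL variations, that page is a good candidate for a canonical tag.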

It’s important to be consistent with your internal links, taking care to ensure they’re all pointing to the correct canonical URL. We recently updated our site from ‘http:’ to ‘https:’ for greater security and stronger SEO (Google prefers ‘https:’ sites as they offer a safer user experience), and we had to initiate a number of 301 redirects to make sure any links (both internal and inbound) pointing to the old URL structure now automatically redirect to the new one. This means both search bots and real people see the correct content, safeguarding your site and maintaining your authority.
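As a rough illustration of the redirect logic, here’s a Python sketch that works out where an old URL should point. It assumes a single preferred ‘https:’ and ‘www.’ address; the hostnames and the `redirect_target` helper are hypothetical, and in reality your web server or CMS would send the 301 response with this URL in its Location header.

```python
from urllib.parse import urlsplit, urlunsplit

# Hypothetical hostnames for illustration; swap in your own.
PREFERRED_HOST = "www.example.co.uk"
KNOWN_HOSTS = {"www.example.co.uk", "example.co.uk"}


def redirect_target(url):
    """Return the URL to 301-redirect to, or None if no redirect is needed."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if host not in KNOWN_HOSTS:
        return None  # Not one of our addresses, so leave it alone.
    if parts.scheme == "https" and host == PREFERRED_HOST:
        return None  # Already the preferred version.
    # Keep the path and query string; only the scheme and host change.
    return urlunsplit(("https", PREFERRED_HOST, parts.path or "/", parts.query, ""))
```

Keeping the path and query intact means each old page redirects to its direct equivalent rather than dumping everyone on the homepage.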

Beware copied content

In March 2017, Google published their latest Search Quality Evaluator Guidelines, and duplicate content isn’t mentioned at all. Copied content, however, is (p.30):

Important: The Lowest rating is appropriate if all or almost all of the MC (main content) on the page is copied with little or no time, effort, expertise, manual curation, or added value for users. Such pages should be rated Lowest, even if the page assigns credit for the content to another source.

Thus, if you’re not scrupulous about the words that make up your pages, you’re playing with fire and are likely to suffer low rankings in the dusty depths of the search engines.

Quoting somebody else’s work is fine, underlining your point and backing up your argument, but it’s considered best practice to link to the original source (as we have with Google above), referencing the creator, signalling you’re not claiming authorship or trying to manipulate search results in any way.

Generally, a quote will only make up a very small percentage of the content on the page, and Google takes the whole page into account; if the ratio of copied to unique content is minimal, you’ll have nothing to worry about.

There should always be an emphasis on adding value, so you should only quote other people’s work if you’re using it in a wider context, rather than simply plonking it on your page because it sounds good and you want visitors to think you’ve written it.

Not only could this end up in copyright lawsuits, it’ll also severely hamper your SEO. Google rewards uniqueness, so take time to research your blog posts properly instead of scraping the work of others.

Handy tools

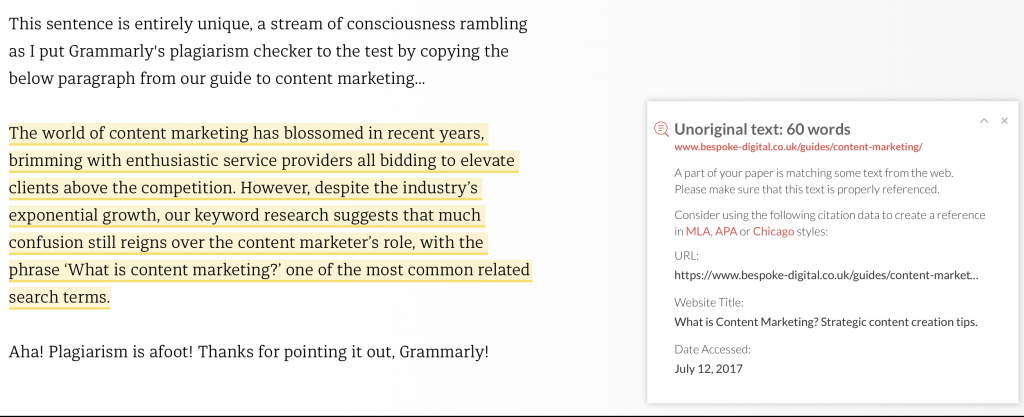

Grammarly is primarily billed as an application to help writers keep on course with word-perfect spelling and grammar, but the premium version has a plagiarism checker that is invaluable when it comes to identifying copied content. Here’s a screenshot of it in action, after I pasted in a paragraph from our Insider’s guide to content marketing.

Using this feature can be especially helpful if you outsource your content writing, as it’ll flag up any potential issues your provider may be causing. If substantial amounts of text are copied, the search bots will likely filter your web pages out of their search results, and you don’t want to be caught unintentionally copying your competitor’s content.

Copyscape is another plagiarism checker that allows you to search for copies of your web page by simply typing in your URL. If you find that others are copying your content, or if you’ve unwittingly copied somebody else’s, you can take appropriate action to rectify the situation.

Copyscape also has a free comparison tool that allows you to compare two documents or web pages side-by-side, highlighting blocks of copied content and allowing you to make sufficient edits. Always try to add genuine value, though, rather than simply tweaking a few words here and there to give the appearance of unique content.

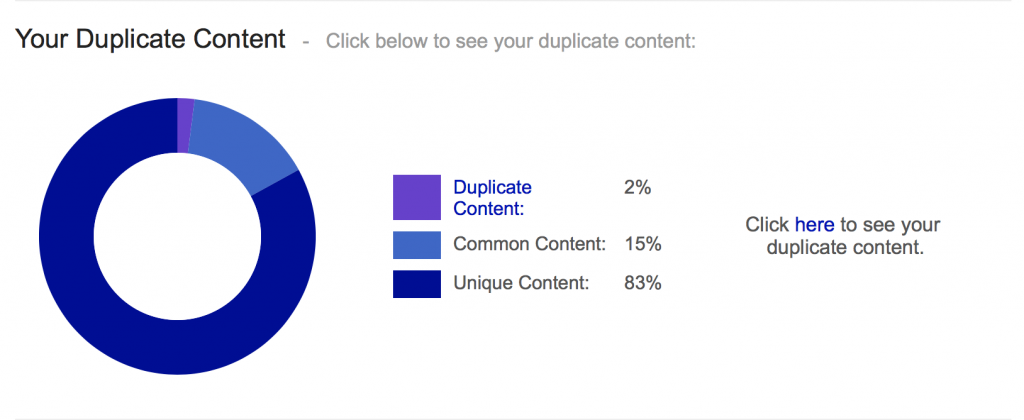

SiteLiner is great for conducting a quick website audit, showing where you have issues of duplicate content, broken links and poorly designed sitemaps.

Being able to click on the duplicate content section is great, giving you a clear visualisation of what areas may need attention.

What is ‘near-duplicate’ content?

While the line between duplicate and copied content may be somewhat blurry, the issue of ‘near-duplicate’ content is something that Google is particularly keen to stamp out.

Gary Illyes, Google’s self-proclaimed Chief of Sunshine and Happiness, recently replied to a tweet to help clarify the term:

Think of it as a piece of content that was slightly changed, or if it was copied 1:1 but the boilerplate is different

— Gary “鯨理” Illyes (@methode) June 11, 2017

So while it is possible to tweak things, carefully swapping words with the help of a thesaurus or re-arranging content in some way, Google is getting much better at spotting ‘spun’ web content, i.e. that which is near-duplicate.
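We don’t know exactly how Google measures this, but a common textbook way to estimate how close two passages are is to compare their overlapping word sequences (known as ‘shingles’) using Jaccard similarity. Here’s a minimal Python sketch of that idea; it’s an illustration of the general technique, not Google’s method, and the function names are our own.

```python
import re


def shingles(text, size=3):
    """Split text into a set of overlapping word sequences."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(text_a, text_b, size=3):
    """Return a score from 0.0 (nothing shared) to 1.0 (identical shingles)."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a and not b:
        return 1.0  # Two empty texts are treated as identical.
    return len(a & b) / len(a | b)
```

Comparing “We sell durable aprons for professional kitchens in Bristol” with the same sentence ending in “Leeds” gives a score of 0.75, which shows how little a swapped place name does to make a page unique.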

The Panda and Penguin algorithm updates have made it essential to put quality above quantity, so it’s no longer acceptable to partake in shortcuts that may have once elevated your search rankings.

You have to be unique if you want to pique interest and get the approval of Google, so make sure your content is up to scratch.

If you’re frustrated by your web results and feel you can do better, please call us on 0117 230 6010 or email enquiries@bespoke-digital.co.uk to have a chat and arrange your FREE Digital Health Check.
